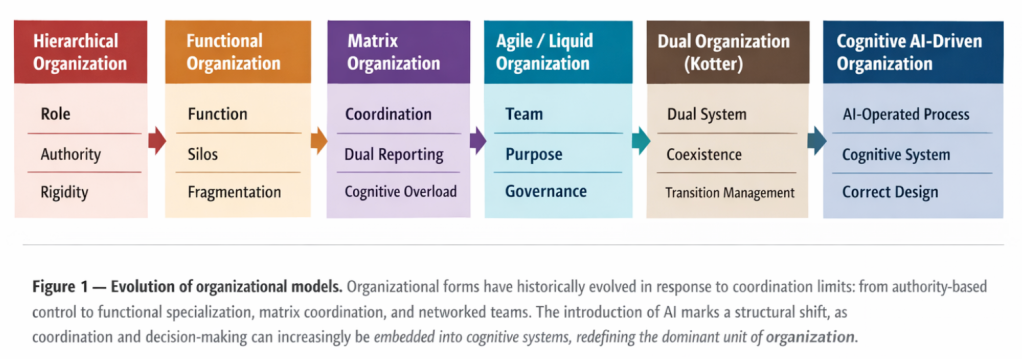

Over time, organizations have evolved not only in structure but in the basic unit around which work is coordinated. Each dominant organizational model emerged as a response to concrete limits of control, specialization, coordination and adaptation, rather than as a management fashion. As Alfred D. Chandler showed, organizational structure is never neutral: it reflects the organization’s real strategy, not its declared intentions.

Later work, particularly by Henry Mintzberg, expanded this view by showing how organizations stabilize around distinct structural configurations.

The introduction of AI disrupts a premise shared by all these models: that work, decision-making and coordination are inherently human. This shift does not result from task automation alone, but from the emergence of non-human coordination and decision capabilities, forcing a reassessment of what constitutes the dominant unit of the organization.

From this perspective, organizational evolution can be understood as a progressive displacement of structural load — from hierarchical authority to human coordination and increasingly toward cognitive operations assisted or executed by AI systems.

Raúl García Vega

Pillars of future organizational design

The adoption of AI within organizations is no longer a matter of expectation or isolated experimentation, but a growing operational reality. As with previous technological shifts, its impact extends beyond the creation of new roles, forcing a reassessment of how work is organized and how decision-making is governed.

Any attempt to design AI-enabled organizations fails unless two fundamental design pillars are properly understood: the universal rules of human organizational design and the concept of cognitive friction.

Universal rules of human organizational design

These rules do not constitute methodologies or best practices in an operational sense. They are direct consequences of the limits of language, attention and human cognition. When they are ignored, organizations tend to generate structural noise, loss of focus and apparent hierarchies that fail to resolve the problems they are meant to manage (Chandler; Mintzberg; Thompson; Miller).

- Rule 1. If you want an area to be strategic, give it the importance it deserves. What is strategic is structurally embedded through decision power, resource control and access to priority-setting forums; structure reveals real strategy, not rhetoric.

- Rule 2. If you want continuity in a process, unify; if you want specialization, segregate. Continuity requires end-to-end accountability and a single decision chain, while specialization demands functional separation; mixing both without an explicit trade-off leads to fragmentation.

- Rule 3. No executive should have more than seven direct reports. Human capacity for monitoring and decision-making is structurally limited; increasing direct reports increases noise rather than control.

- Rule 4. Every responsibility must have a single identifiable owner. Shared responsibility dilutes accountability, risk ownership and learning, becoming functionally equivalent to having no owner.

- Rule 5. If a problem requires constant coordination, the structure is poorly designed. Persistent coordination signals mislocated decisions or fragmented responsibilities, as effective structures shift complexity to the design phase.
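Rules 3 and 4 are concrete enough to check against an org chart. The following is a minimal sketch, assuming the chart is available as simple mappings; the data layout, function names and sample roles are hypothetical illustrations, and only the seven-report limit and the single-owner requirement come from the rules above.

```python
from collections import Counter

MAX_DIRECT_REPORTS = 7  # limit stated in Rule 3


def span_violations(reports_to: dict[str, str]) -> dict[str, int]:
    """Return each manager whose number of direct reports exceeds the Rule 3 limit."""
    counts = Counter(reports_to.values())
    return {manager: n for manager, n in counts.items() if n > MAX_DIRECT_REPORTS}


def ownership_violations(owners: dict[str, list[str]]) -> dict[str, list[str]]:
    """Return each responsibility that does not have exactly one owner (Rule 4)."""
    return {resp: who for resp, who in owners.items() if len(who) != 1}


# Hypothetical sample data: eight managers report to the COO, who reports to the CEO.
reports_to = {f"manager_{i}": "coo" for i in range(8)}
reports_to["coo"] = "ceo"

# Hypothetical responsibilities: one well owned, one shared, one orphaned.
owners = {
    "pricing": ["cfo"],
    "onboarding": ["coo", "chro"],
    "data quality": [],
}

print(span_violations(reports_to))
print(ownership_violations(owners))
```

With this sample data, the first check flags the COO with eight direct reports, and the second flags the shared and the unowned responsibility. Rules 1, 2 and 5 depend on judgment about strategy and process design and are not reducible to a check of this kind.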

The concept of cognitive friction

In the context of AI, friction refers to the degree of human intervention, attention and validation deliberately retained in the use of a system. It does not describe a technical inefficiency, but a design choice aimed at ensuring control, understanding and accountability in human–AI interaction.

This friction emerges when AI systems do not operate in a fully autonomous manner, but instead support decision-making, expert judgment or contextual interpretation. Unlike traditional friction — associated with bureaucracy, rework or poor coordination — cognitive friction in AI systems is a direct consequence of how autonomy, responsibility and human oversight are intentionally configured.

Friction should therefore not be systematically eliminated. In stable and highly standardizable processes, reducing friction enables efficiency and technical autonomy. In contexts characterized by ambiguity, elevated risk or significant impact, maintaining friction becomes a conscious design decision to preserve human judgment and decision traceability.

Without going into operational detail, cognitive friction can take several forms: temporal friction (deliberate delays or validation windows), scope friction (limits on the system’s domain of action or on decision thresholds), functional friction (separation between generation, validation and authorization) and technical friction (controls, explainability, traceability or manual intervention mechanisms).

As a result, cognitive friction becomes a central variable in organizational design, determining when AI systems can operate autonomously and when they must function as cognitive guides, directly shaping roles, supervision and the organization of work.
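One way to make this design variable explicit is to record, per process, how much friction is retained. The sketch below is illustrative only: the class names, the flag-counting rule and the numeric values (validation windows, decision ceiling) are assumptions introduced for the example, not part of the framework described here. It maps the four forms of friction to fields and chooses an operating mode from the process characteristics discussed above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    AUTONOMOUS = "autonomous"          # AI operates without routine human validation
    SUPERVISED = "supervised"          # AI output is validated by a human
    HUMAN_JUDGMENT = "human judgment"  # AI acts as a cognitive guide only


@dataclass(frozen=True)
class ProcessProfile:
    name: str
    standardizable: bool
    ambiguous: bool
    high_risk: bool
    high_impact: bool


@dataclass(frozen=True)
class FrictionPolicy:
    mode: Mode
    validation_window_hours: int         # temporal friction
    max_decision_value: Optional[float]  # scope friction (None = no ceiling)
    separate_authorizer: bool            # functional friction
    audit_trail: bool                    # technical friction


def choose_policy(p: ProcessProfile) -> FrictionPolicy:
    """Pick a friction level; thresholds and values are assumed for illustration."""
    flags = sum([p.ambiguous, p.high_risk, p.high_impact])
    if flags >= 2:
        return FrictionPolicy(Mode.HUMAN_JUDGMENT, 24, 0.0, True, True)
    if flags == 1 or not p.standardizable:
        return FrictionPolicy(Mode.SUPERVISED, 4, 10_000.0, True, True)
    return FrictionPolicy(Mode.AUTONOMOUS, 0, None, False, True)


# Hypothetical processes used only to exercise the rule.
invoice = ProcessProfile("invoice matching", True, False, False, False)
credit = ProcessProfile("credit approval", False, True, True, True)

print(invoice.name, "->", choose_policy(invoice).mode.value)
print(credit.name, "->", choose_policy(credit).mode.value)
```

The point of the sketch is the shape of the decision rather than the specific thresholds: friction is set per process, as an explicit and reviewable choice, instead of being removed by default.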

Operations management and AI

The analysis developed in this article draws on a set of complementary perspectives that together point to a deeper organizational shift. Debates around the Chief AI Officer, articulated in MIT Sloan Management Review and synthesized by IMD, frame the CAIO as a transitional role — useful for structuring early AI adoption but structurally unstable if it crystallizes as a permanent silo. A similar pattern emerges in Harvard Business Review’s analysis of the Chief Data Officer, showing how data governance alone becomes insufficient once value no longer lies in data quality itself, but in the activation of decisions within real operational contexts.

From a complementary perspective, work published by MIT Sloan Management Review and McKinsey & Company highlights the growing convergence between the CIO and the COO. The former evolves toward the design of decision capabilities and cognitive platforms, while the latter becomes the point where AI either becomes operational — or fails — through the recombination of humans, processes and AI systems.

Taken together, these perspectives suggest that AI is not merely reshaping executive titles or org charts but displacing the organization’s centre of gravity toward a cognitive system. The challenge is therefore no longer how to redefine individual C-level roles in isolation, but which organizational structure allows technology, data, operations and decision-making to be coherently integrated once AI begins to operate processes and decisions directly.

Phase 1. Unified AI strategy leadership

By integrating architectural foundations, data and model governance, end-to-end process redesign and organizational transition, a unified AI leadership structure becomes qualitatively different from an expanded CIO or a reinforced CDO. Its mandate is to transform processes end-to-end and to decide, in an integrated manner, what is automated, what is supervised and what remains under human judgment.

The AI operations function works through a lifecycle-oriented squad model rather than permanent functional coverage. Squads are activated to transform a process and are dissolved once that process reaches stability.


- Analysis & design squads decompose processes, identify automation opportunities versus activities requiring human supervision and design human-AI interaction, including controls, exceptions and metrics.

- Deployment & organizational transition squads manage role changes, adoption and governance.

- Build squads, specialized by business domain, develop solutions during the transformation phase without becoming permanent teams.

Phase 2. Structural reconfiguration of affected areas

Once a process enters the transformation radius, its organizational structure evolves progressively. Functional CxOs may remain as accountability references, but execution-centred hierarchies lose weight in favour of outcome-oriented models and cognitive control. In practice, most areas operate in hybrid modes, combining traditional work with AI-supported cognitive operating models depending on process type, standardization and risk.

Three human roles become central. The Process Owner holds end-to-end accountability for outcomes and system performance. The AI Output Supervisor validates results, monitors quality, bias, compliance and security, and adjusts operational criteria as contexts change. The AI Operator orchestrates agents and workflows, manages exceptions and improves system behaviour in production.

The resulting operating model shifts human effort away from execution and toward design, supervision and responsibility for cognitive systems.

Future organizations and conclusions

In a scenario of full AI deployment, the classic functional model would progressively lose viability as an operational structure. Organizations would move away from functional silos and human execution chains toward governance by results produced and evaluated by AI systems. Human responsibility would shift from execution to system design, supervision and control.

Under this configuration, organizational structures could be simplified significantly. The CEO would retain responsibility for vision and strategic narrative. The CFO would remain accountable for financial performance, risk and compliance. The COO would assume end-to-end process orchestration and the operation of the cognitive systems executing them. Other C-level functions would tend to be absorbed or transformed into embedded capabilities. The CIO role could dilute as infrastructure and platforms evolve toward standardized, integrated services, while the CDO could cease to exist as an autonomous function once data governance becomes inseparable from operations and the AI layer.

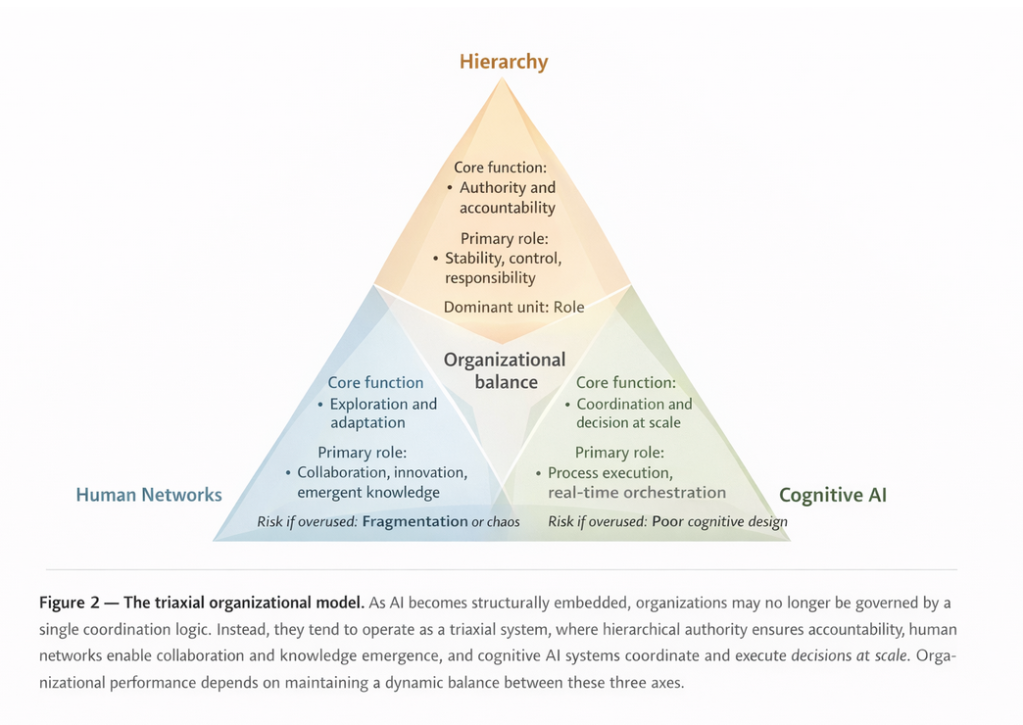

At this point, organizations could no longer be interpreted solely through hierarchical or dual models. Instead, they would tend to operate as a triaxial system. The hierarchical–functional axis would continue to provide stability, formal accountability and institutional control. The human network axis — described in dual operating system models, particularly by Kotter — would remain essential for exploration, innovation and adaptation under uncertainty. Alongside them, a third axis would emerge: a cognitive one, in which AI systems operate as a structural layer, stabilizing processes, orchestrating decisions and reducing distributed cognitive load.


This triaxial organization does not describe a new org chart, but a dynamic balance among three forms of coordination: authority, human influence and artificial cognitive judgment. AI-driven triaxial organizations should be understood as a reference framework for interpreting how organizations may evolve once AI ceases to be a supporting tool and becomes a structural layer of the organizational system.

This article is published as part of the Foundry Expert Contributor Network.
