Scaling artificial intelligence (AI) from experimental pilots to integrated enterprise capabilities remains an arduous task for large, legacy organizations. Despite billions in investment, MIT’s NANDA report indicates a stark reality: “95% of organizations are getting zero return” on their AI initiatives. While data science teams focus on perfecting algorithms, a more dangerous gap is emerging for business leaders and CIOs: a “trust gap” that keeps advanced capabilities trapped in pilot purgatory.

The problem is rarely the technology itself. As many IT leaders find, they may have AI models coming out of their ears, yet almost none are in production because the organization does not trust the autonomous output.

This lack of trust stems from a structural mismatch: our inherently static enterprise architectures and hierarchical operating models were optimized for a stable, human-only world. They lack the architectural mechanisms to oversee, delegate and manage the accountability of machine actors. This ‘trust gap’ is often justified by high-profile systemic failures like Australia’s Robodebt scheme, the subject of a 2023 Royal Commission, where automated logic was allowed to operate without necessary oversight, despite internal warnings that the underlying algorithm was legally flawed.

The strategic shift: From data management to decision architecture

Historically, IT leaders have focused heavily on building ‘data products’ and analytics to drive evidence-based results. However, data is only the fuel; in the age of autonomous agents, the engine is the logic of choice. Successfully bridging the trust gap requires a fundamental shift in focus: from merely managing data to explicitly architecting the decisions enabled by that data.

In most organizations, decisions are currently invisible, buried within legacy code or left implicit in human job roles. This lack of transparency is the primary reason why AI agents remain stuck in pilot programs. If an organization cannot define the specific logic, rules and constraints for a human actor, it cannot safely delegate them to a machine. Making that logic explicit matters all the more because agents built on trimodal thinking can apply three modes: System 1 (fast, intuitive, pattern-based thinking, as in a neural network), System 2 (slow, data-driven and rules-based thinking) and System 3 (a control mode that strikes a balance between System 1 and System 2).

The breakthrough here is that by architecting organizations specifically for AI, we fix a long-standing human problem: the lack of clear, actionable context in delegation. We move beyond the traditional principal-agent dilemma, where delegated choices diverge from corporate intent, by creating an explicit decision architecture. This shift allows us to move from rigid hierarchies to an integrated human-AI operating model, where both humans and agents collaborate with clear, inspectable accountability.

Bridging the trust gap requires more than a technology upgrade; it necessitates a fundamental transition from managing data to architecting decisions. The following comparison highlights how an organization’s architectural DNA must evolve to support a world where machines and humans share the logic of choice.

| Feature | Legacy operating model (before) | Decision-driven adaptive model (after) |
| --- | --- | --- |
| Primary focus | The focus is on maintaining static risk compliance and control through deterministic, rule-based software for value creation. | The focus is on explicitly architecting the decisions that data enables, rather than merely managing the data. |
| Object of control | Control, whether centralized, decentralized or federated, is focused on managing stable business processes and static data products. | The primary object is the decision product, which bundles logic, data, ethics and rules into a single, transparent unit. |
| Delegation logic | Delegation remains implicit or manual, buried within static job roles and rigid organizational charts. | Delegation logic is defined by explicit, formal contracts that provide humans with the clarity and transparency needed to safely delegate tasks to AI agents, including their oversight. |
| Governance mode | Oversight relies on episodic auditing and manual, point-in-time (static snapshot) compliance checks that lag behind real-time operations. | Oversight is continuous, with real-time regulation through feedback loops and an architectural control layer designed to oversee machine actors. |
| Human role | Humans are responsible for manual task execution and episodic oversight of automated workflows. | Humans maintain high situation awareness by focusing on strategic intervention and ethical steering. This is achieved through human-in-the-loop interaction for final decision authority and human-on-the-loop mechanisms for real-time oversight of autonomous agents. |

Sonia Boije, Asif Gill

Architecting the decision-driven enterprise

To bridge the trust gap and move AI from experimental pilots into reliable production, organizations must transition toward an integrated human-AI operating model. This approach transforms the black box of AI into a transparent and governable system by focusing on three essential architectural components that work in tandem to ensure machine actions remain aligned with human intent.


The journey begins with the decision product, which serves as the fundamental unit of the new architecture. In the legacy world, IT leaders managed data products; in the agentic era, the focus must shift to managing the logic of choice. For instance, a Bank Loan Approval decision product does more than just run a calculation; it bundles the applicant’s data with explicit credit-scoring logic, regulatory fair-lending constraints and ethical rules designed to prevent bias. By treating this bundle as a single product, the organization can audit the AI agent’s reasoning as easily as a human’s, ensuring the final decision remains a governed and trustworthy business outcome.

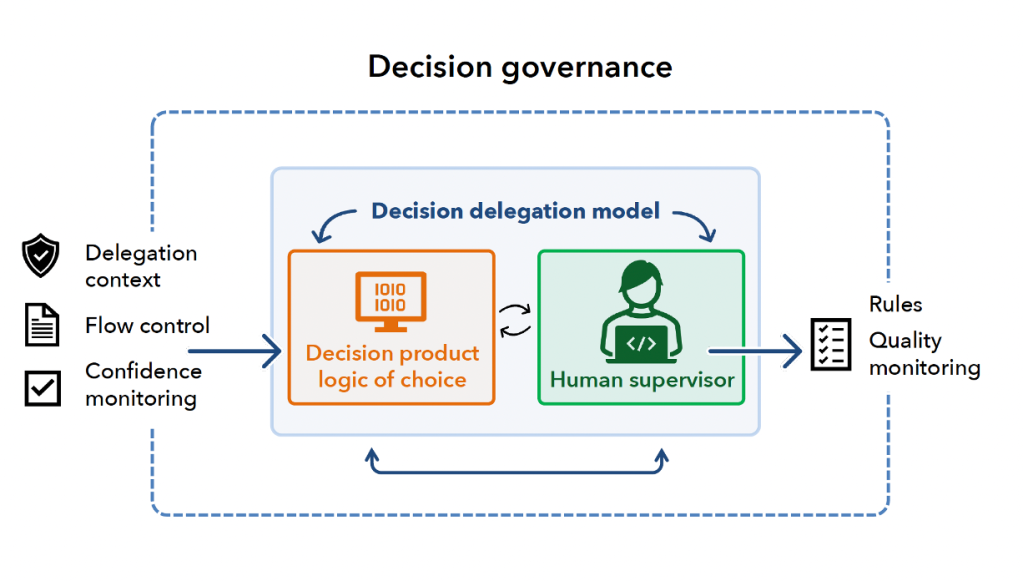
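As a rough illustration of the loan-approval example, a decision product could be modeled as a small object that carries its rules with it and returns an inspectable record instead of a bare yes or no. This is a minimal sketch only: the `DecisionProduct` and `Rule` names, the thresholds and the protected-attribute list are all hypothetical and are not taken from any framework the article describes.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    """One named constraint inside a decision product (hypothetical type)."""
    name: str
    kind: str  # "logic", "regulatory" or "ethical"
    check: Callable[[dict], bool]  # returns True when the rule is satisfied


@dataclass
class DecisionProduct:
    """Bundles the rules for one business decision into a single unit."""
    name: str
    rules: list[Rule]

    def decide(self, applicant: dict) -> dict:
        """Evaluate every rule and return a record that can be audited later."""
        results = {rule.name: rule.check(applicant) for rule in self.rules}
        return {
            "decision_product": self.name,
            "inputs": dict(applicant),
            "rule_results": results,
            "approved": all(results.values()),
        }


# Illustrative thresholds only; real credit policy would come from the business.
loan_approval = DecisionProduct(
    name="bank_loan_approval",
    rules=[
        Rule("min_credit_score", "logic", lambda a: a["credit_score"] >= 650),
        Rule("debt_to_income_cap", "regulatory", lambda a: a["debt_to_income"] <= 0.40),
        Rule(
            "no_protected_attributes",
            "ethical",
            lambda a: not (a.keys() & {"gender", "ethnicity"}),
        ),
    ],
)

record = loan_approval.decide({"credit_score": 700, "debt_to_income": 0.30})
```

Because `record` lists the outcome of every rule alongside the inputs, a reviewer can see which constraint drove the result, whether the decision was executed by a person or by an agent.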

However, defining the decision unit is only the first step; organizations must then formalize the hand-offs between humans and machines through a robust delegation model. By using a principal-agent lens to structure these interactions as formal contracts, CIOs provide the architectural framework for business leaders to establish clear relay logic. These protocols determine exactly when an AI agent can execute a task and when it must transfer the baton back to a human supervisor. This is not just a technical requirement but a human-first benefit: by forcing managers to provide AI agents with clear context and specific instructions, organizations inadvertently fix the vague delegation habits that often plague human-only teams. This clarity ensures humans maintain the situation awareness necessary to intervene strategically whenever an AI encounters a complex edge case.
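One way to picture such relay logic is a contract object that names the accountable human and states the limits within which the agent may act alone. The sketch below is an assumption-laden illustration: `DelegationContract`, `Route`, and the amount and confidence limits are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AGENT_EXECUTES = "agent_executes"  # human-on-the-loop: agent acts, human monitors
    HUMAN_DECIDES = "human_decides"    # human-in-the-loop: human holds final authority


@dataclass(frozen=True)
class DelegationContract:
    """Explicit terms under which a principal delegates a decision to an agent."""
    decision: str
    principal: str         # accountable human role
    max_amount: float      # agent may act alone up to this value
    min_confidence: float  # below this, the baton goes back to the human

    def route(self, amount: float, confidence: float) -> Route:
        """Return who acts on this case under the contract's limits."""
        if amount <= self.max_amount and confidence >= self.min_confidence:
            return Route.AGENT_EXECUTES
        return Route.HUMAN_DECIDES


# Hypothetical contract for the loan example.
contract = DelegationContract(
    decision="bank_loan_approval",
    principal="credit_manager",
    max_amount=50_000,
    min_confidence=0.90,
)

routine_case = contract.route(amount=20_000, confidence=0.95)
edge_case = contract.route(amount=80_000, confidence=0.95)
```

Writing the limits down in this form is what forces the clarity the paragraph describes: the manager has to state who is accountable and where the agent's authority ends.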

To ensure these delegated interactions remain aligned with corporate intent over time, the decision governance model provides a final layer of oversight. Unlike traditional governance, which is often episodic and manual, this model regulates in real time through an architectural control layer designed specifically to oversee machine actors. It relies on feedback loops and Trimodal Thinking (a cognitive framework for managing the dynamic switching between autonomous, manual and collaborative modes) to keep machine behavior aligned with human intent. This also allows the CIO to monitor agency costs, the monitoring expenditures required to maintain that alignment, in real time. This continuous oversight provides the trust needed to scale AI safely across the enterprise.
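A feedback loop of this kind can be sketched as a monitor that watches how often humans overturn the agent and shifts the operating mode accordingly. Everything here is an assumption made for illustration: the `GovernanceMonitor` class, the use of override rate as the signal, the thresholds, and the review counter as a crude proxy for agency cost.

```python
from collections import deque


class GovernanceMonitor:
    """Tracks recent human overrides and suggests an operating mode."""

    def __init__(self, window: int = 20, collaborative_at: float = 0.10,
                 manual_at: float = 0.30) -> None:
        self.outcomes = deque(maxlen=window)  # True = human overrode the agent
        self.collaborative_at = collaborative_at
        self.manual_at = manual_at
        self.reviews = 0  # count of reviewed decisions, a rough monitoring-effort proxy

    def record(self, overridden: bool) -> None:
        """Feed one reviewed decision back into the loop."""
        self.outcomes.append(overridden)
        self.reviews += 1

    def override_rate(self) -> float:
        """Share of decisions in the current window that a human overturned."""
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def mode(self) -> str:
        """Map the override rate onto autonomous, collaborative or manual mode."""
        rate = self.override_rate()
        if rate >= self.manual_at:
            return "manual"
        if rate >= self.collaborative_at:
            return "collaborative"
        return "autonomous"


monitor = GovernanceMonitor(window=10)
for _ in range(10):
    monitor.record(overridden=False)
mode_when_stable = monitor.mode()

monitor.record(overridden=True)
monitor.record(overridden=True)
mode_after_overrides = monitor.mode()
```

The rolling window keeps the signal current, so the suggested mode reflects recent behavior instead of a point-in-time audit.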

A roadmap for the decision-ready CIO

To begin the transition toward a decision-driven enterprise, CIOs should initiate a strategic architectural audit designed to move the organization beyond the limitations of binary on/off automation. The process begins by identifying and capturing invisible business-critical decisions, establishing a decision catalog alongside the existing data catalog. This effort surfaces where autonomous choice logic is currently implicit, allowing high-stakes decisions to be prioritized for formal, transparent governance.
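A decision catalog can start as a simple structured list. The sketch below assumes a hypothetical `CatalogEntry` record and a 1 to 5 stakes scale; it shows how implicit, high-stakes decisions could be pulled to the top of the governance backlog.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogEntry:
    """One business decision recorded in the catalog (hypothetical schema)."""
    decision: str
    owner: str
    location: str   # where the logic lives today
    explicit: bool  # False when logic is buried in code or job roles
    stakes: int     # 1 (low) to 5 (high), an assumed scoring scale


def governance_backlog(catalog: list[CatalogEntry]) -> list[CatalogEntry]:
    """Return implicit decisions only, highest stakes first."""
    implicit = [entry for entry in catalog if not entry.explicit]
    return sorted(implicit, key=lambda entry: entry.stakes, reverse=True)


# Example entries are invented for illustration.
catalog = [
    CatalogEntry("loan_approval", "credit_manager", "legacy batch job", False, 5),
    CatalogEntry("marketing_email_timing", "crm_lead", "vendor tool", False, 1),
    CatalogEntry("fraud_hold", "risk_officer", "documented rule set", True, 5),
    CatalogEntry("refund_exception", "support_lead", "team convention", False, 3),
]

backlog = [entry.decision for entry in governance_backlog(catalog)]
```

Keeping the `location` field alongside each entry makes it visible where choice logic currently hides, which is the point of the audit.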

Once these decisions are surfaced, the focus shifts to formalizing hand-offs between humans and machines through an explicit delegation model. By defining specific human-in-the-loop or human-on-the-loop protocols, the CIO ensures that human operators maintain the responsibility and situation awareness necessary to manage complex edge cases, effectively reducing the risk of ‘automation bias’.

Finally, the architecture must establish value-risk traceability by creating a formal decision value chain. This ensures that every delegated action is bundled with the specific rules, logic and ethical constraints required for a real-time review. By making the black box of AI-driven choice fully transparent and inspectable, the enterprise can finally move its agentic capabilities out of pilot programs and into reliable, high-value production. Ultimately, this transition from rigid, bureaucratic structures to dynamic, decision-driven systems is what will define architectural readiness in the age of autonomous agents. Crucially, this is not about adding administrative weight; it is about replacing the hidden friction of vague delegation with a clear, machine-speed framework for trusted action.

This article is published as part of the Foundry Expert Contributor Network.
