You know the scene. The CFO opens the quarterly review. Revenue per employee. Operating margin. Cycle time.

Flat. Flat. Flat.

Meanwhile, every board member is reading about AI. The hype is everywhere. As CIO, you face a relentless expectation: “Where’s our piece of the AI pie?”

And you have answers. You can show a killer demo. Copilot writing code in seconds. A chatbot deflecting 40% of support tickets. Marketing cranking out triple the content. The dashboards glow green with adoption metrics.

But the numbers don’t lie. The financials are flat.

Welcome to the Improvement Trap — the AI Plateau of 2026. A survey of nearly 6,000 executives across four countries found that while 70% of firms now use AI, over 80% report zero measurable productivity impact over the past three years — measured in hard numbers like sales per employee, not satisfaction surveys.

The industry’s instinct has been to blame targeting: We pointed AI at the wrong step. That’s part of it. But the deeper problem is older than any model:

We are automating processes that should be simplified or eliminated — not accelerated.

IT delivers value one way: Doing necessary tasks faster and cheaper than manual effort. There is zero value in doing a task faster if that task shouldn’t exist. There is zero value in automating a broken process. You just get a faster broken process.

Simplify before you automate

This is not a new idea. It’s the oldest idea in process improvement, and every generation of technology leaders has to relearn it. Mainframes, ERP, cloud, digital transformation — every wave produced the same mistake: Bolt new technology onto existing processes, then wonder why the results disappoint.

AI is the latest and most expensive version of this pattern.

The discipline predates every technology trend: First eliminate, then simplify, then automate. A process with twelve steps, six handoffs and three approval layers doesn’t need AI making each step faster. It needs someone asking why there are twelve steps, whether three can go and whether the approvals exist because of policy or habit. Only after the process is lean does automation deliver real value.

The small minority of firms showing real productivity gains — the 4–6% outliers in the NBER-affiliated research — aren’t using better models. They’re doing the process work first. Simplify, redesign, then automate the streamlined workflow. Everyone else is pouring concrete over a dirt road and calling it infrastructure.

It was always people, process and technology

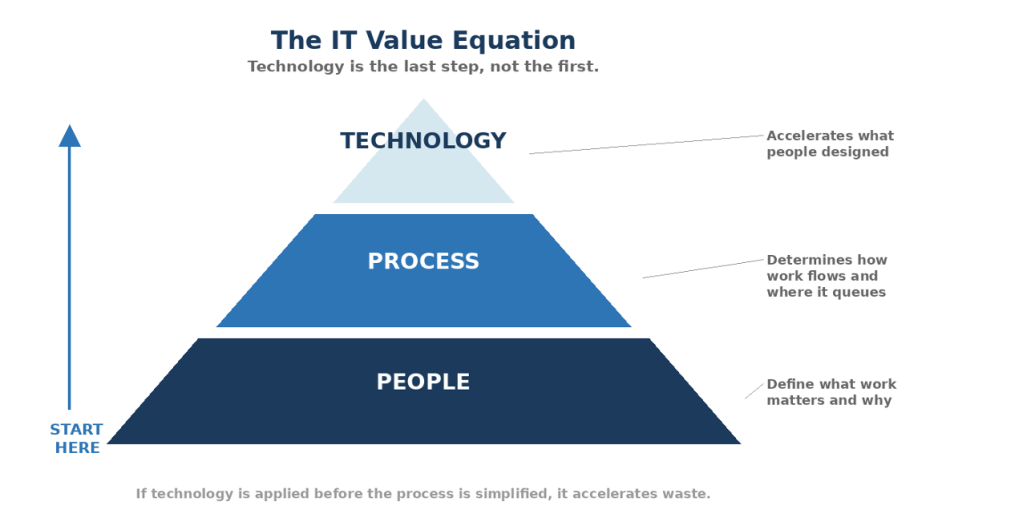

The technology industry spent two years treating AI like it changed the fundamental equation of IT value. It didn’t. The equation hasn’t changed since the first mainframe:

David Angelow

People define what work matters and why. Process determines how it flows, who touches it, where it queues. Technology accelerates what people designed. If the people haven’t rethought the work and the process hasn’t been simplified, technology is accelerating waste. Doesn’t matter how powerful it is.

The metrics confirm this. Controlled studies of experienced developers show a perception gap of nearly 40 points: They believed they were 20% faster with AI, but were actually 19% slower on complex tasks. An eight-month Harvard-linked study found AI tools led to longer days and broader task scope, not less work. People used AI to do more, not to stop doing anything.

These aren’t failures of AI. They’re failures of process. Nobody asked whether the work should be done differently before handing people a faster tool to do it the same way.

The productivity hallucination

The most dangerous metric in your building right now is user sentiment.

People feel faster because individual tasks feel smoother. But at the system level, throughput stays flat or degrades under the weight of Shadow Bottlenecks — the pileups that happen when one department’s AI-accelerated output floods another department’s unchanged manual process.

California Management Review’s “AI Productivity Blind Spot” names the mechanism: Leaders count time saved in local tasks but miss the extra load dumped on downstream functions (legal review, QA, compliance, data governance) that erases the gains. An MIT-affiliated study found the same pattern: Only a small fraction of AI pilots deliver measurable revenue impact. The rest stall between “great demo” and “shows up in the P&L.”

This creates a feedback loop you can ride for years: Teams report enthusiasm, dashboards turn green, investment feels justified — while cycle time, unit cost and throughput refuse to move.

We are pouring billions into local optimizations while the global constraint sits untouched — often in a department the CIO doesn’t even own.
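The Shadow Bottleneck effect is easy to see in a toy model. The sketch below assumes a simple serial workflow where each stage handles a fixed number of items per week; the stage names and rates are hypothetical, chosen only for illustration. Tripling the speed of one stage leaves system throughput unchanged and turns the difference into a growing queue in front of the unchanged stage.

```python
def system_throughput(stage_rates):
    """Items per week a serial workflow can finish: capped by its slowest stage."""
    return min(stage_rates.values())


def weekly_queue_growth(stage_rates, upstream, downstream):
    """Items per week piling up in front of `downstream` when `upstream` outpaces it."""
    return max(0, stage_rates[upstream] - stage_rates[downstream])


# Hypothetical rates (items per week), not taken from any study cited here.
before = {"drafting": 10, "legal_review": 10, "approval": 12}
after = dict(before, drafting=30)  # AI triples drafting; nothing else changes

print(system_throughput(before))                                 # 10
print(system_throughput(after))                                  # 10
print(weekly_queue_growth(before, "drafting", "legal_review"))   # 0
print(weekly_queue_growth(after, "drafting", "legal_review"))    # 20
```

In this model the drafting team would report a threefold gain while the P&L sees none, and legal review inherits a backlog that grows by 20 items every week.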

Two traps and a blueprint

The Klarna Trap. Klarna automated the easy customer-service tickets and announced the AI was doing the work of 700 agents. Great headline. But the underlying process — how tickets got routed, escalated, resolved — didn’t change. The remaining tickets skewed hyper-complex. Reports emerged of continued hiring and growing escalation costs. Klarna didn’t have a technology problem. It had a process problem: It automated the surface without simplifying the system underneath.

The Chegg Trap. Chegg didn’t deploy AI badly — AI deployed against them. ChatGPT cannibalized their homework-help business. Chegg responded with a faster AI tutor. But the constraint had shifted from “how fast can we answer” to “why would anyone pay us to answer.” Revenue kept declining through 2025. Processes don’t just break because they’re internally inefficient — they become obsolete because the market moved. Chegg automated something that no longer needed to exist. No amount of speed fixes that.

The JPMorgan Blueprint. JPMorgan identified that legal and compliance review was the actual bottleneck in commercial lending — 360,000 hours of lawyer time annually on the critical path. They didn’t just add AI. They simplified first: Which clauses actually needed human review? Which were standard enough to automate entirely? They redesigned the workflow, eliminated unnecessary steps, then applied AI to the streamlined process. Lawyers got redeployed to genuine exceptions. The result showed up in the P&L: Faster closings, lower costs, real throughput improvement.

The difference wasn’t the AI. It was the process work that happened before the AI got deployed.

The math when you get it right

When you do the process work first, the numbers are hard to argue with.

Omega Healthcare identified manual document processing as their revenue-cycle constraint. Before deploying AI, they mapped the workflow, eliminated redundant steps, standardized inputs. Then they applied AI document understanding to the simplified process. Turnaround times cut 50%. 15,000 employee hours recovered per month. 99.5% accuracy — which eliminated the rework bottleneck that kills most AI pilots.

A Fortune 500 financial services firm targeted invoice processing: 45 minutes of manual touchpoints per invoice. They didn’t add AI to the existing process. They eliminated unnecessary approvals, consolidated handoffs, standardized exception handling. Then they automated what remained. Result: 6.75 minutes per invoice — 85% cycle-time reduction. They didn’t make the clerk faster. They removed most of the clerk’s unnecessary work first.

In both cases, the process simplification delivered as much value as the AI itself. That’s the point most organizations keep missing.
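The invoice figures above can be checked with a few lines of arithmetic. This is a minimal sketch: the 45-minute and 6.75-minute figures come from the example, while the monthly invoice volume is a hypothetical number added only to show how per-invoice savings scale.

```python
def cycle_time_reduction_pct(before_minutes, after_minutes):
    """Percentage reduction in cycle time from before to after."""
    return 100 * (before_minutes - after_minutes) / before_minutes


def hours_recovered(before_minutes, after_minutes, invoices):
    """Total hours freed across a given number of invoices."""
    return (before_minutes - after_minutes) * invoices / 60


print(cycle_time_reduction_pct(45, 6.75))  # 85.0

# Hypothetical volume: 1,000 invoices a month (an assumption, not a reported figure).
print(hours_recovered(45, 6.75, 1000))     # 637.5
```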

The Monday morning audit

Take your top five AI initiatives. Before asking whether the AI is working, ask whether the process deserves to be automated at all.

| Before You Automate | Then Ask | Red Flag |
| --- | --- | --- |
| 1. Should this process exist at all? | What happens if we stop doing it? | Nobody can name the business outcome it serves. |
| 2. Can it be simplified first? | How many steps and approvals can we eliminate? | AI layered onto the current process with no redesign. |
| 3. Is AI removing work or generating it? | Does it eliminate a task or add a review step? | People spend time reviewing, correcting and governing AI output. |
| 4. Where’s the P&L impact? | Cycle time, unit cost or headcount, measurable in two quarters? | Success defined by adoption or sentiment. |

The test: If you can’t explain what was simplified or eliminated before AI was applied, you’re automating waste. That isn’t transformation. It’s expensive inertia.
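For teams that track their AI portfolio in a spreadsheet or script, the four audit questions can be encoded as a simple screen. The sketch below is one possible shape, not a prescribed tool: the `Initiative` class, its field names and the sample project are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    serves_named_outcome: bool      # Q1: can someone name the business outcome?
    process_redesigned_first: bool  # Q2: was the process simplified before AI?
    removes_net_work: bool          # Q3: does AI eliminate work, not add review steps?
    has_pnl_metric: bool            # Q4: is success tied to cycle time, unit cost or headcount?


def red_flags(initiative):
    """Return the audit red flags an initiative trips, in question order."""
    checks = [
        (initiative.serves_named_outcome, "No named business outcome"),
        (initiative.process_redesigned_first, "AI layered on with no redesign"),
        (initiative.removes_net_work, "AI output generates review work"),
        (initiative.has_pnl_metric, "Success defined by adoption or sentiment"),
    ]
    return [flag for passed, flag in checks if not passed]


# Hypothetical portfolio entry for illustration.
chatbot = Initiative("support chatbot", True, False, True, False)
print(red_flags(chatbot))
# ['AI layered on with no redesign', 'Success defined by adoption or sentiment']
```

An initiative that returns an empty list has at least answered the questions; one that trips the second flag is a candidate for redesign before any further AI spend.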

The strategic sequence

Wave 1 — Simplify. Before any AI deployment, map the end-to-end process. Eliminate steps that exist because of habit. Remove approvals that add cycle time without adding value. Consolidate handoffs. Not glamorous work. It’s the work that makes everything after it pay off.

Wave 2 — Automate the Constraint. Once the process is lean, identify where work actually queues and apply AI there. Document processing, compliance triage, claims adjudication. BCG documents carriers getting real-time resolution on 70% of simple claims and cutting costs 30–50% this way. If AI doesn’t take work off the critical path of a simplified process, it’s not a priority.

Wave 3 — Prepare the People. The biggest bottlenecks usually sit under the COO, CFO or General Counsel — not the CIO. The constraint is organizational, not technical. Stop asking vendors for roadmaps. Start asking department heads for cycle-time data and their willingness to redesign how their teams work. If they can’t produce the data or won’t change the process, they’re not ready for AI — and you’re not ready to invest.

Reassess quarterly. Constraints migrate. The bottleneck you relieve in Q1 creates a new one in Q2. If your AI investment map hasn’t changed in two quarters, you’re funding yesterday’s constraint.

Monday morning action plan

So what do you do Monday morning? Three things.

- Update your criteria for evaluating AI projects. Use process impact as the lens, not technology capability.

- For every initiative, ask: “Is the scope broad enough to address the constraint end-to-end — or are we just speeding up one step while the bottleneck moves downstream?” Review your AI portfolio now. Every project should pass a simple test: Was the process simplified before AI was applied? If not, either redesign the process first or kill the project.

- Have the discipline to simplify before you automate — and the courage to kill projects that are automating processes that shouldn’t exist.

It comes down to one question:

“Did we simplify the process before we automated it — and can we prove the investment moved the constraint?”

If the answer is no, you’re funding the hallucination. If the answer is yes, you’ve remembered something this industry keeps forgetting: Technology is the last step, not the first. It was always people, process and technology. AI didn’t change the order. It just raised the cost of getting it wrong.

This article is published as part of the Foundry Expert Contributor Network.
