For the last 20 years, the economics of software were beautifully boring. The business model was predicated on a simple truth: Build once and sell infinitely.

In the traditional SaaS era, the marginal cost of adding a new user was negligible. Whether a customer logged in once a month or once a minute, the cost to the vendor, a tiny slice of database storage and server capacity, remained relatively flat and predictable. This predictability allowed companies to scale aggressively, offering unlimited seats and all-you-can-eat plans with confidence. Margins were high, costs were fixed and scale was the ultimate solvent for all problems.

Generative AI has broken that model.

We have entered a new era where software has a variable cost of goods sold. Every time a user interacts with a Gen AI feature, cash leaves the building. It leaves in the form of computing power, database searches and third-party API fees. Engagement, once the North Star metric for product success, has become a double-edged sword. In this new reality, a highly engaged user is no longer guaranteed to be your most profitable customer. They might actually be your biggest liability.

I call this the AI Volatility Tax. It is the silent erosion of gross margins caused by unmanaged, variable-cost intelligence, and it is currently the single biggest blind spot in enterprise innovation budgets. When inference costs rise faster than revenue per user, model performance becomes irrelevant because the business collapses before the model does.

FOMO and the rush to negative margins

Why are companies rushing into this trap? The driver is fear of missing out (FOMO).

The pressure from boards to “do AI” is suffocating. This urgency triggers a collective suspension of disbelief regarding unit economics. We saw this in the dot-com bubble, and we are seeing it again. Leadership teams are so terrified of being perceived as laggards that they are greenlighting features that are structurally unprofitable.

I recently advised a legal-tech firm that had integrated a powerful large language model (LLM) to help lawyers summarize case files. The product worked beautifully. It was accurate, fast and the users loved it. Usage skyrocketed post-launch.

But when the first quarterly financial review arrived, the celebration ended. The team had routed every single query, from complex legal reasoning to simple keyword searches, through a high-end, reasoning-heavy model. When we modeled the unit economics, the math was brutal. For every $1.00 of subscription revenue the feature generated, the company was spending $1.20 on processing and infrastructure costs.
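The arithmetic behind that finding is simple enough to sketch. The snippet below is a minimal illustration using only the two figures from this example; the function name `gross_margin` is my own label, not something taken from the firm's model.

```python
def gross_margin(revenue: float, variable_cost: float) -> float:
    """Return gross margin as a fraction of revenue."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - variable_cost) / revenue


# Figures from the legal-tech example: $1.20 of processing and
# infrastructure cost for every $1.00 of subscription revenue.
margin = gross_margin(revenue=1.00, variable_cost=1.20)
print(f"Gross margin: {margin:.0%}")
```

A negative result means every additional engaged user deepens the loss, which is why usage growth made the picture worse rather than better.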

They had achieved product-market fit but failed business model fit. They were effectively subsidizing their customers’ work with their own venture capital. This wasn’t a software business anymore; it was a charity for law firms. This is not innovation. It is wealth transfer.

Sequoia Capital has highlighted this massive gap between the billions spent on AI infrastructure and the actual revenue realized by applications, dubbing it “AI’s $600B Question.” The gap exists because too many organizations are treating AI as a feature to be bolted onto existing fixed-price contracts rather than a consumable resource that requires a fundamentally different pricing and architectural strategy.

The verification penalty

There is another, more insidious layer to this inflation that rarely appears on the architecture diagram: The human cost of verification. We often assume AI output is “free” once the model is paid for, but in high-stakes B2B environments, that is a dangerous fallacy.

I worked with a customer support platform that implemented an AI agent to draft responses to complex technical tickets. The goal was to reduce agent handling time by 50 percent. The model was expensive, but the projected labor savings were massive.

Six months later, the savings hadn’t materialized. In fact, handling time had gone up.

When we audited the workflow, we found the problem. The AI was generating responses that were 90 percent accurate. That sounds good, but it meant 10 percent of the responses contained subtle, hallucinated technical errors that could cause data loss for customers. Because the agents couldn’t trust the AI blindly, they had to read and verify every single line of the generated text against the documentation.

It turns out that reading and fact-checking a confident-but-wrong paragraph often takes longer than writing the correct answer from scratch. We had traded “creation labor” for “verification labor,” and verification is harder to scale. The AI wasn’t a replacement for the human; it was a burden on the human. This “verification penalty” acts as a hidden tax on every transaction, eating away the margin gains we thought we had secured.
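One way to reason about the verification penalty is as an expected-time comparison. The sketch below is a rough model under stated assumptions: only the 10 percent error rate comes from the audit above, while the minute values and the function name `expected_ai_handling_minutes` are hypothetical placeholders you would replace with your own measurements.

```python
def expected_ai_handling_minutes(
    verify_minutes: float,
    error_rate: float,
    fix_minutes: float,
) -> float:
    """Expected time per ticket when every AI draft must be verified.

    Every draft costs verify_minutes; a fraction error_rate of drafts
    also costs fix_minutes to correct.
    """
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    return verify_minutes + error_rate * fix_minutes


# Hypothetical timings, for illustration only.
WRITE_FROM_SCRATCH_MINUTES = 10.0  # assumed manual baseline
VERIFY_MINUTES = 9.0               # assumed line-by-line check of a draft
FIX_MINUTES = 12.0                 # assumed rework when an error is found
ERROR_RATE = 0.10                  # from the audit: 10 percent of drafts wrong

with_ai = expected_ai_handling_minutes(VERIFY_MINUTES, ERROR_RATE, FIX_MINUTES)
penalty = with_ai - WRITE_FROM_SCRATCH_MINUTES
print(f"With AI: {with_ai:.1f} min, manual: {WRITE_FROM_SCRATCH_MINUTES:.1f} min")
print(f"Verification penalty per ticket: {penalty:+.1f} min")
```

The point of the model is that the error rate is not the dominant term. If verification alone takes nearly as long as writing, even a small error rate tips the total past the manual baseline.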

Richard Ewing

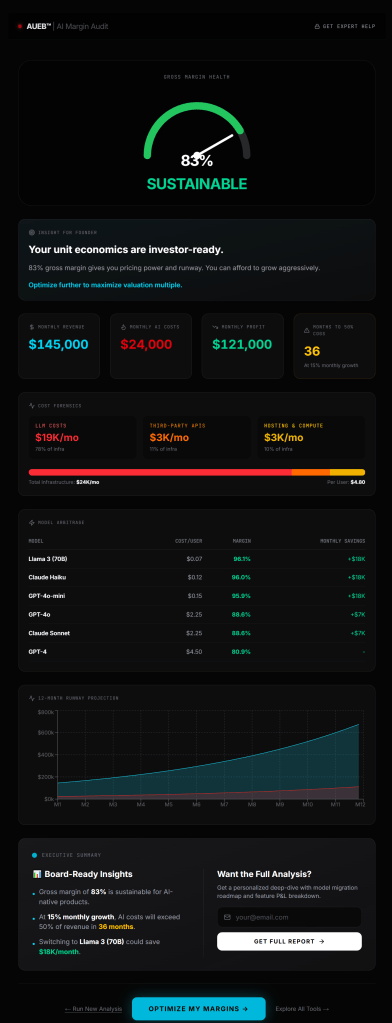

The unit economics trap. This diagnostic from the AI Margin Audit breaks down the “variable cost of goods sold” for AI features. It highlights how infrastructure costs (LLM tokens, vector DBs, third-party APIs) can silently erode gross margins if not governed.

The vendor trap: Why models are not commodities

Compounding this volatility is the uncomfortable fact that you do not own the supply chain. In traditional software, you owned the server or rented a standardized slice of computing power. You knew exactly what a CPU cycle cost.

In the Gen AI ecosystem, you are renting intelligence from a fluctuating oligopoly. The price of a processing token is not set by physics. It is set by business strategy. A model provider can change their pricing structure, deprecate a model or alter their rate limits with little notice.

I have seen product roadmaps crumble overnight because a provider shifted their API pricing, instantly turning a profitable feature into a loss leader. This introduces a new layer of vendor risk that most product managers are ill-equipped to handle. We are used to worrying about uptime, but now we also have to worry about yield.

If your entire product strategy relies on a specific proprietary model maintaining its current price point, you don’t have a strategy. You have a dependency.

The multiplier effect of autonomous agents

If basic “chat with your data” features are expensive, the next wave of AI, autonomous agents, threatens to multiply this volatility tax many times over.

Agents are designed to be independent. They don’t just answer a question. They reason, plan, execute tools and critique their own work. A single user prompt like “Plan my marketing campaign” might trigger a chain of 50 or 100 internal reasoning loops. The agent might search the web, read a PDF, write a draft, critique the draft and rewrite it, all while the billing meter is spinning.

In a traditional software environment, loops are cheap. In a Gen AI environment, loops are expensive. Without strict governance, an agent getting stuck in a reasoning loop is the cloud equivalent of leaving a tap running in a drought.

Governance is not just about safety or bias. As the National Institute of Standards and Technology (NIST) emphasizes in their AI Risk Management Framework, it is essential for economic viability. Organizations that fail to implement circuit breakers and cost caps on their autonomous workflows risk waking up to cloud bills that obliterate their quarterly earnings.
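A circuit breaker of this kind can be very small. The following is a minimal sketch, not a production implementation: the names `CostGuard`, `BudgetExceeded` and `run_agent` are hypothetical, and the agent is simulated as a list of per-step costs rather than real model calls.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


class BudgetExceeded(RuntimeError):
    """Raised when an agent run crosses its step or spend limit."""


@dataclass
class CostGuard:
    max_cost_usd: float   # hard spend cap for one user request
    max_steps: int        # hard cap on reasoning/tool loops
    spent_usd: float = 0.0
    steps: int = 0

    def charge(self, cost_usd: float) -> None:
        """Record one step's cost and trip the breaker if a cap is crossed."""
        self.steps += 1
        self.spent_usd += cost_usd
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit of {self.max_steps} exceeded")
        if self.spent_usd > self.max_cost_usd:
            raise BudgetExceeded(f"cost cap of ${self.max_cost_usd:.2f} exceeded")


def run_agent(step_costs: Iterable[float], guard: CostGuard) -> Tuple[int, str]:
    """Simulate an agent loop; each item is the cost of one step (assumed)."""
    completed = 0
    try:
        for cost in step_costs:
            guard.charge(cost)
            completed += 1
    except BudgetExceeded as exc:
        return completed, f"halted: {exc}"
    return completed, "finished"


# A runaway agent that would otherwise loop 100 times at $0.02 per step.
guard = CostGuard(max_cost_usd=0.50, max_steps=20)
completed, status = run_agent([0.02] * 100, guard)
print(completed, status)
```

In this sketch the breaker trips on the step that crosses a cap, so the worst-case overspend is bounded by the cost of a single step. A stricter variant would estimate the cost before each call and refuse to start it.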

The governance imperative: Dynamic orchestration

So how do winning organizations avoid this tax? They stop treating intelligence as a monolith.

The mistake the legal-tech firm made was using a PhD-level model to do intern-level work. Not every query requires the most expensive, smartest model on the market.

The emerging best practice is dynamic model orchestration. This strategy treats intelligence like a supply chain. When a request comes in, a lightweight router assesses the complexity of the task.

- Is it a simple classification task? Route it to a cheap, fast, specialized model.

- Is it a summarization task? Route it to a mid-tier workhorse model.

- Is it complex, multi-step reasoning? Only then do we authorize the expense of a frontier model.

By matching the cost of the model to the value of the query, organizations can protect their margins. Gartner research on AI FinOps confirms that organizations implementing this type of cost governance early are able to accelerate adoption responsibly, while those who ignore it are often forced to shut down popular features due to runaway costs.
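The three-way routing rule described above can be sketched in a few lines. This is an illustration under stated assumptions: the tier names, the per-token prices, the fallback to the mid tier and the assumption that the task type is already known are all hypothetical. A real router would need its own lightweight classifier and current provider pricing.

```python
from enum import Enum
from typing import List, Tuple


class Tier(Enum):
    SMALL = "small"        # cheap, fast, specialized model
    MID = "mid"            # mid-tier workhorse model
    FRONTIER = "frontier"  # expensive reasoning model


# Hypothetical prices in USD per 1,000 tokens, for illustration only.
PRICE_PER_1K_TOKENS = {
    Tier.SMALL: 0.0005,
    Tier.MID: 0.003,
    Tier.FRONTIER: 0.03,
}

ROUTES = {
    "classification": Tier.SMALL,
    "summarization": Tier.MID,
    "reasoning": Tier.FRONTIER,
}


def route(task_type: str) -> Tier:
    """Pick a model tier; unknown task types fall back to MID (an assumption)."""
    return ROUTES.get(task_type, Tier.MID)


def cost_usd(tier: Tier, tokens: int) -> float:
    """Estimated cost of processing `tokens` tokens on the given tier."""
    return PRICE_PER_1K_TOKENS[tier] * tokens / 1000


# A small, made-up workload of (task type, token count) pairs.
workload: List[Tuple[str, int]] = [
    ("classification", 500),
    ("summarization", 4000),
    ("reasoning", 6000),
]

routed_cost = sum(cost_usd(route(task), tokens) for task, tokens in workload)
frontier_only_cost = sum(cost_usd(Tier.FRONTIER, tokens) for _, tokens in workload)
print(f"Routed: ${routed_cost:.5f}  Frontier-only: ${frontier_only_cost:.5f}")
```

The comparison at the end mirrors the legal-tech firm's mistake: sending everything to the frontier tier is the simplest architecture and the most expensive one.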

This article is published as part of the Foundry Expert Contributor Network.
